DavidStables (talk | contribs) |

DavidStables (talk | contribs) |

||

| Line 180: | Line 180: | ||

Once Loaded, reformatted and super-indexed. The address database is ready to be used | Once Loaded, reformatted and super-indexed. The address database is ready to be used | ||

=== Candidate address | === Candidate address pre-formatting === | ||

It is assumed that an address is submitted as one or more delimited fields with the post code at the end of available. Optionally, an area based qualifier is included, which is useful of there is no post code. For example a practice post code or simply a list of major postcodes. These narrow down the options when checking against 100 million+ addresses. | It is assumed that an address is submitted as one or more delimited fields with the post code at the end of available. | ||

Optionally, an area based qualifier is included, which is useful of there is no post code. For example a practice post code or simply a list of major postcodes. These narrow down the options when checking against 100 million+ addresses. | |||

Candidate addresses are subject to the same reformatting rules as standard addresses (spell checking etc). However this needs to be done in two stages | Candidate addresses are subject to the same reformatting rules as standard addresses (spell checking etc). However this needs to be done in two stages | ||

Revision as of 11:30, 4 June 2020

Overview

Address matching algorithm uses human semantic based pattern recognition, manipulation and matching judgement as it's matching technique, supported by a few machine based algorithms such as the Levenshtein distance algorithm

Firstly, some definitions of terms used:

| Term | Description |

|---|---|

| Candidate address | The address string submitted by a user or a subscriber system for matching |

| Standard address | An address that an organisation, considered to be an authority, has stated as referring to a real location. |

Matching a candidate address to a standard address consists of a process whose objective is to reach a high level of confidence that the candidate address refers to the same location as the standard address.

When attempting to match a candidate address to one standard address from a set of standard addresses there are two objectives. If both objectives are achieved, the address is said to be matched. The objectives are:

- To reach a high level of confidence that the candidate address refers to the same location as the standard address.

- To judge that the standard address that has been matched to, is the most likely from all of the addresses in the set to refer to the same location.

It can be seen that the objectives include two relative measures, confidence and judgement. A question arises as to whether it is possible for these measures to be mathematically or statistically based, or not.

It does not take long to show the fundamental problem with address matching.

Consider the following candidate and standard addresses

Candidate :Flat 1,15 high street, YO15 5TG Standard :Flat 1,15 high street, YO15 5TG

By any computable measure, e.g. length of string, character position match, it can be seen that these two addresses are identical. One can easily deduce from this that there will be a high level of confidence that they refer to the same location. Also, as it is not possible for any other address to be more similar, a sound judgement can be made that this is the most likely from all of the addresses, except for the other identical addresses in the set.

Consider the following

Candidate :Flat 1 ,15 high street YO15 5TG Standard :Flat 1, 15 high street YO15 5TG

One can see that they are different. Whilst both have the same characters, the position of character 7 and 8 have been transposed. Yet a human being would almost certainly say they refer to the same place. Also, unless there is another address that is exactly the same as the candidate, the match is the most likely.

Consider the following:

Candidate :Flat 1,15 high street YO15 5TG Standard :Flat 11, 5 high street YO15 5TG

Like the above example there has been a transposition at position 7 and 8. Yet they are obviously different addresses. They are very unlikely to refer to the same location unless there were no other flats or numbers on the street. Even If there were no other numbers or flats on the street then the confidence level may still only be moderate. Not enough to match?

So it can be seen that in fact it is semantics that drive matching judgements and not just positional variations and character mismatches. Likewise:

Candidate :15 Flat a High Street YO15 5TG Standard :Flat a 15 high Street YO15 5TG

These two addresses are very likely to be the same unless a closer fit can be found. A closer fit is NOT a closer fit simply by character matching.

Candidate :15 Flat a High Street YO15 5TG Standard :1 Flat 5a high street YO15 5TG

These are also quite close but clearly semantically different.

So it can be seen that in nearly all cases, when comparing one address with another, or an address against a set of addresses, it is the semantic interpretation of the address that determines the match. Human based semantic interpretation is still more reliable than AI for language-based judgements.

It follows that address matching rules are nothing more than trying different addresses on for size and see which one a human being thinks means the same or a similar thing.

Pattern recognition and manipulation

What does the computer do? Basically it computerises the process of human pattern recognition and human suggested string manipulation.

Firstly, we know from our own experience that words that are similar or the same words in different orders often mean the same thing. We also know that letters and numbers can be transposed without too much loss of meaning. We also know that misspelled words when corrected usually mean the same thing.

For example, we make a judgement that the word 'flr', in the context of an address, is likely to mean ‘floor’. In a cookbook though it might mean “flour”. Also we know that the same meaning can have different words or spelling such as 1st, and first. Often different punctuation means more or less the same '5/6' and '5-6' etc.

From this knowledge we can set pattern recognition rules. We can say that full stops can be removed or '/' replaced with '-' because we know that they are unlikely to affect the semantics.

However, there are always twists. It is reasonable to infer that 'st' is likely to be short for 'street'. However, the phrase 'St Katherine's way' implies a saint, not a street. Therefore the manipulation rules must also take account of potentially wrong manipulations. In this case, a 'street' can be recognised by checking the resulting expansion e.g. ('high st -> high street') against a standard street index, or the position of the 'st' in the string. These patterns and manipulations are coded and validated and adjusted if wrong manipulations are discovered.

Human judgement based manipulations can result in false positives. As the algorithms are developed the introduction of false positives must be checked regularly and the manipulation rules adjusted.

Rules,based on knowledge of the world can be quite clever. Knowing that a “top floor flat” is more likely to be the same as “flat c” from a list of a, b, c than “flat a”, gets a preference from a set of options.

Address components

Addresses have more than a dozen semantically different components. Name of a flat, number of a flat, number and letter of a flat, range of numbers for a flat, a building name, a building number, a building number and letter, a range of numbers with or without letters, a dependent thoroughfare, a street, a dependent locality, a locality, a town, a city and a post code. On top of this there are the descriptions in relation to the front or back of the building, the level within a building or whether facing east or west, north or south. There are many words to describe flat like units, maisonettes, studios, houses, apartments and so on.

With address labels with up to around 10-12 semantically different field meanings and with human tendency to place words in the wrong fields and in the wrong order in the wrong fields, or inadvertent field separators means that field allocations cannot be relied on. manipulation rules take account of wrong allocations. In some cases users submit address with everything in one field. Manipulation must take account of no user determined address fields at all.

With each manipulation comes a human judgement that the resulting string is still a true reflection of the candidate and that a match to a standard would be correct. It is only human judgements that can be used to match addresses.

There are a few useful mathematical techniques. Pluralisation and de-pluralisation. Use of Levenshtein distance algorithm with allowable distances roughly proportional to the length of the words can help. Partial matching of words with front part matching (as humans often get the end of phrases wrong rather than the start) helps also.

However, these are just techniques to speed up what would otherwise be a manual process relying in eyesight, concentration and a high boredom threshold.

Conclusion on approach

A computer only speeds up the process of pattern recognition and string manipulation that would otherwise by done by a human being, each manipulation being specifically undertaken with a view to enable a visual check that would result in a match.

If a particular manipulation falls short of something that a human knows would match, the manipulation is abandoned and another one tried. The usual approach is to start with part of the address that seems to match, make small manipulations initially, then if no matches are found, increase the degree of manipulation. There is no useful machine-based algorithm based on a purely mathematical functions. Whilst a mathematical function like transpositions may highlight likely character matches (and there is an association between character matching and semantic meaning) relying only this approach would reduce possible semantic matches achieved by larger distance comparisons using human judgement.

The most significant problem is what to do when manipulations build on manipulations that are already dubious. I this case the level of confidence drops at this point and the process can start again from a different starting point, repeated until the algorithm author has judged that one set of manipulations give higher confidence than another. This results in a series of rankings for each set of manipulations. If the rankings are in the wrong order, then a premature match might be made, resulting in a false positive match. Adjusting the ranking uses the same type of judgement as adjusting the manipulation.

Address matching processes

Firstly some of the terms used are defined. This table lists the main terms used in the description

| Term | Description |

|---|---|

| Subscriber | An organisation (or system that is operating on behalf of an organisation) that is seeking to access data from the Discovery service |

| Standard address | An address provided by an authoratitive body that has got an assured link to a UPRN |

| Candidate address | The address string submitted by a user or a subscriber system for matching |

| UPRN | Unique property reference number as supplied by the ordnance survey organisation |

| Address based premium | The set of databases as provided by the Ordnance survey holding the UPRNs and several addresses for each |

| Equivalent match | The UPRN is deemed to be equivalent to the property as described by the submitted address |

| Sibling match | The UPRN is assigned to a property nearby e.g. next door |

| Sub-property match | The UPRN is assigned to a “higher level property than the submitted address.

For example if the submitted address wasflat 1 and the UPRN was assigned to flat then this would be a supra-property match |

| Sub property match | The UPRN is assigned to a lower levelproperty than the submitted address.

For example if the submitted address was flat 1 and the UPRN was assigned to flat 1 a then this would be a sub property match |

| DPA file | The Post office Delivery point address file |

| LPI file | The Local Property identifier file |

The Standard address database

Source and nature of standard addresses

The Ordnance Survey (OS) has a product; ‘Address Base Premium (ABP)’ which contains information about every UPRN. It contains three main files, with each entry in each file assigned directly to a UPRN

- A Delivery Point Address file (DPA) which lists all the Royal Mail addresses sourced from Royal Mail’s PAF (Postcode Address File) which is a non-geocoded list of addresses that mail is delivered to.

- A Land Property identifier file (LPI) which lists geographical addresses as maintained by contributing Local Authorities. They represent the legal form of addresses as created under street naming and numbering legislation. The structure of a geographic address is based on British Standard BS7666.

- A Basic Land Property Unit file (BLPU) which lists all the unique properties, UPRN, post code and geographical coordinates.

There can be more than one version of the LPI geographic address for a UPRN, capturing approved, alternative, provisional and historical (inactive) versions of the DSI exclude status 8. All are included as potential matches

address. All are available for matching.

These files are considered as the standard address details for each UPRN. Whilst each file represents addresses in a slightly different way, they are aligned with each other. The LPI file contains entries that are often more granular than the DPA file. Some UPRNs only exist in the LPI file and not in the DPA file and vice versa. Thus the LPI file can usually be considered as the more definitive of the two but a match to either can be considered correct.

In addition to a difference in the level of detail in the two files there is sometimes a relationship between one UPRN and another in that one UPRN can be a “parent” UPRN of a more detailed property. For example a parent UPRN may be assigned to a building whereas a child UPRN is assigned to a flat within a building. Both the parent and the child UPRN may exist in the DPA file and the LPI file.

In effect this means that there are two sources of the truth for an address, and matching to either source provides the UPRN. It is important to map to a child UPRN where possible as the lowest level detail better represents actual households.

Loading addresses, formatting, indexing and custom indexes

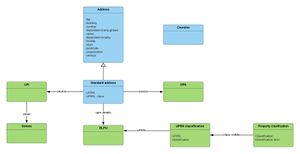

Addresses are loaded into the database from source (See green classes).

Each source standard address is reformatted into a standard address object, which is an instance of a class that extends the address class by dint of having a UPRN and status. The objective of the reformatting is to produce a single model of an address for matching to which candidate addresses will also adhere.

Format also includes standardisation of the use of suffix and ranges e.g. 1-2, 1a, 1a-1f.

Formatting of the standard address also includes 'fixing' some errors and removing some extraneous words that are unnecessary in the matching process. These include:

- Spelling corrections, modified by context

- Replacement or removal of punctuation and lower casing

- 'Flat' removals, involving the removal of terms from the flat field that signal a flat are can be considered equivalent e.g. flat, apartment, towers, rooms, flat no, unit workshop, maisonette. Some special flat terms are retained for potential removal later in a leaf manipulation e.g. 'studio'

Addresses are then indexed in a number of ways:

- Secondary index on post code street and building name columns

- Multiple Composite indexes on post code, street, number, building flat & post code building & building flat and post code

- Functional indexes on the above including concatenation, de-pluralisations

Once Loaded, reformatted and super-indexed. The address database is ready to be used

Candidate address pre-formatting

It is assumed that an address is submitted as one or more delimited fields with the post code at the end of available.

Optionally, an area based qualifier is included, which is useful of there is no post code. For example a practice post code or simply a list of major postcodes. These narrow down the options when checking against 100 million+ addresses.

Candidate addresses are subject to the same reformatting rules as standard addresses (spell checking etc). However this needs to be done in two stages

- Before the address fields are populated

- Once more after the address fields are populated

Using human example driven pattern recognition a set of manipulation rules are followed that result in an address object being populated. There are hundreds of rules to follow. Many rules manipulate data when new patterns are recognised following from previous manipulations.

For example, Let us assume the following pattern and manipulation occurs

flat 1 St Paul's house 15 high street

Pattern recognition= street preceded by number manipulation = populate number and street

number_street = 15 high street

However, consider a different example that requires additional manipulating

flat 1 St Paul's house 14- 15 high street

Pattern recognition= street preceded by number manipulation = populate number and street resulting in

number_street = 15 high street

Which is wrong, and therefore a subsidiary pattern of two numbers, with or without a dash, making sure that there is a flat number already allocated, and the number street is therefore

number_street= 14-15 high street

Iterative Pattern recognition together with manipulation results in >100 variations which never complete the number of possible manipulations needed.

The curve of the number of manipulations needed to improve the match detection rate by a certain percentage is exponential.

Matching candidate to standard addresses

Match failure circumstances

It is not always possible to match a Discovery address exactly to a specific UPRN. There are 3 reasons why a Discovery address may not match

- It is a false address i.e. the address does not actually exist in reality and cannot therefore be matched

- There may not be sufficient information in the candidate address for it to match at the level of detail required.

- The Discovery address may contain more detail than the standard address and is therefore too accurate

There are missing entries in the DPA and LPI file. The files are constantly being updated and released every 6 weeks. There is a massive amount of building going on. Even changes to post codes can occur and the DPA file (which contains the post code) may be out of date or wrong.

Qualified matching

As well as matching to a UPRN there is also requirement to qualify the relationship between a Discovery address and the UPRN. We refer to these qualifiers as “approximation qualifiers” as they mean that the UPRN is geographically close to the property. Each of the main 5 address fields is assigned a qualifier. The five qualifiers are:

- Equivalent match i.e. we believe the property is the property that the UPRN refers to

- Near match. We believe the property is the property that the UPRN refers to but the flat or property may be split. This only applies to matches where the flat or number suffix in either the Discovery or the ABP addresses may be ignored or dropped. This is in effect equivalent.

- Child - Sub- property. The Discovery address represents a sub-property of the UPRN property. For example the Discovery address “flat 1a Eagle house” may only match to the higher level DPA or LPI entry of “Eagle house”

- Parent. The Discovery address represents a supra-property (parent) of the UPRN property. For example the Discovery address ‘1 Angel Lane’ does not have an equivalent in DPA or LPI but ‘flat 11, 1 Angel Lane’ does exist so it matches to a more detailed identifier qualified as a supra-property

- Sibling. The Discovery address may represent a sibling of the UPRN property. For example the Discovery address ‘flat 12 , 1 Angel Lane’ may not exist in either ABP file but ‘flat 11, 1 Angel Lane’ does

Qualifiers are assigned in relation to the final post manipulated address match.

It should be noted that approximation qualifiers are used only when the level of matching arrives at the level of a street number or a more detailed approximation to a building or flat. Simply matching to a street is not considered a match and the address.

Poor quality addresses

Candidate addresses are checked for quality. A poor quality address is more likely to remain unmatched, but a quality indicator is assigned whether matched or not. Poor quality indicators include:

- null address lines. i.e. all address lines are null

- The entire address line is too short (<9 characters)

- The post code is missing

- The post code is in an invalid format

Matching algorithms

To formalise the judgement a ranking can be applied to each set of rules that perform a match e.g. (Rank 1 – Rank 80)

A Rank 1 rule would represent an exact match of all fields (taking into account DSBefore

GHIs address cleaning carried out before running the algorithm, or on the fly as part of the algorithm?

spelling corrections and other standard text cleaning methods). A Rank 80 would represent an approximate match based perhaps on fuzzy matching of 2-3 fields and a parent approximation.

By applying the rules in Ranking in order, this avoids matching prematurely with a fuzzy match when a better match exists. It is the false GHYes, address matching is susceptible to high false positive rates

positives that create the problems as the matching gets harder.

This matching process can be considered as a series of recursive reductions in the list of probable matches, recursion to continue until the list can be narrowed to the maximum extent possible. At that point a judgement is made as to whether there is a match with a qualifier, or no match. In addition, during the matching process, performance enhancements are made to enable short cuts.

The starting point is that a submitted address has a potential list of matching candidates of the entire ABP address database. However, it is impractical to match a submitted address by re-ordering the entire ABP address list each time. Therefore one prior assumption is applied. That is that the submitted address must have a DSTalking cross purposes. This is not derived from he address post code but from the requestors system when they make the request. A practice in Cardiff will know their area codes. I suggest they make one request batch per area. It is a vital performance thing I think. So in East London there would be one for ‘e’, one for ‘ec’ one for ‘nw’ etc

GHDo you mean the postcode district must match?

This narrowing of the potential matches by postcode district is called ‘blocking’ and is good practice. However, I have found matches using only the address and not the postcode, but very small number

post code district e.g. ‘E’ or ‘EC’ or ‘YO’.

The algorithms described in this specification consider how to optimise the sort process without creating false approximate matches.

=== Address Fields for matching ===ABP address files contain quite granular field definitions. Discovery address files consist of 1-5 address lines and a post code with each address line not necessarily reflecting the correct address line or field values.

The Discovery address is DSSent you the algorithm in mumps th other week. There have been some enhancements. The parsing algorithms are just as complex as the matching algorithms but more useful. By pre-cleaning the address (on the fly) it massively reduces the number of matching algorithms needed

GHHow do you do this? Do you use parsing or just a lookup?

parsed into the logical address fields represented by the ABP files.

It is assumed that the reader has read and understood the ABP file documentation.

The following fields are taken into account when matching.

Fig 2 – DSThat’s the parsing algorithm. These fields are derived from the rubbish that is sent. At the moment it assumes at least address lines (e.g. 1, 2,3 or 4). Nevertheless , If they are going to be sent as one block then we will need to extend the address parsing to cope. I don’t think that will be a problem though as in any event the matching algorithms shuffles the fields. In other words, best guess improves performance but algorithms take account of them being rubbish

GHIf this algorithm is potentially going to be used for address matching other address data in the future, e.g. local authority addresses, this is rarely separated into address lines, but comes as one concatenated address. How would you parse this?

Address fields for matching

| Discovery formatted field | ABP equivalents |

| Post code | DPA -> BLPU – GHThis should come from the BLPU tablePost code

LPI –> BLPU Post code |

| Locality | DPA – locality

LPI –> Street Descriptor-> Locality |

| Dependent locality | DPA- dependent locality |

| Street | DPA- thoroughfare

LPI – > Street Descriptor / Street |

| Dependent thoroughfare | DPA- Dependent thoroughfare |

| Number | DPA – Building number

LPI – Primary address object – number or range made up of Pao start+ pao suffix ..{+- “-“} +pao end + pao end suffix e.g. 45a-56b 45 |

| Building name or house name | DPA – Building

LPI – Primary address object – pao text |

| Flat number and flat number prefix | DPA – sub building

LPI – Secondary address object number or range made up of either Sao start+ sao start suffix +- {“-“} + sao end + sao end suffix e.g. 1a- 1b 1a 1 Or sao text e.g. flat 1 |

=== Field and content manipulations ===The matching algorithms dictate how the fields and their contents are manipulated to match to the ABP.

==== Spelling and word replacement ====As part of the algorithms there are a set of generic methods that can be applied to content at different times in the GHCheck if I have any additional

algorithms

1) Lower case if case dependency 1) Removal of ‘ with null 1) Replacement of . with ‘ ‘ ( replace full stop with space) 1) Replacement of / with - (replace slash with dash for flat ranges 58/60 58-60 1) Leading and trailing spaces removed 1) Replacement of ‘ ‘ with ‘ ‘ i.e. double space with space 1) Spelling DSBoth abbreviations and some spellings

GHAddress abbreviations corrections (e.g. flt-> flat bld ->building ) from spell correction list

1) Word swapping for equivalent words (e.g. house-> building, apartment -> building, nursing-> care, upper->first) 1) Plural/ singular correction i.e. removal of trailing ‘s’

For a the flat field starting with ‘flat’ ‘DSYes unit workshop

GHAnd ‘apartment’

room’ etc then these can be removed as they are used inconsistently.

i.e. Flat field = ‘Flat 1a’ and Flat field = ‘1a’ and Flat field= ‘room 1a’ would be considered equivalent

==== Levenshtein distance ====For non-exact rankings, equivalent spellings are checked using the GHCan you give examples please?

Thorngrove road, thrngrove road. Levenshtein distance = 1

Levenshtein distance algorithm whereby

1) A distance of 1 is considered acceptable with a minimum phase length of 10 1) A distance of 2 with a minimum phrase length of 10 is acceptable 1) A distance of 3 with a phrase length of > 9 would be acceptable 1) Parameterised maximum distance and length e.g. post code would be 2, with a minimum length of 5

==== Formatting addresses ====In order to optimise matching both the inbound Discovery addresses and the ABP candidate addresses should be formatted to the same address class using the same formatting algorithms.

Discovery addresses can be formatted when submitted. However, as a performance improvement DSI meant creating things like 19a-19b from the secondary address fields to improve performance. It isn’t logically required, just a performance boost (10 fold if used in indexes)

DS

GHDoes ABP need formatting? Can you give an example?

ABP addresses should be pre-arranged to the same common format (as described above) and stored before any address is submitted.

Methods such as character and space removal and spelling corrections and flat suppression should be applied during the formatting before storage. Other algorithms such as plural/ singular may be applied to indexing and other algorithms such as word swapping Levenshtein distance applied to Discovery addresses at run time.

N.B Towns and cities may be GHAgreed.

ignored as it is assumed that the address submission interface also contains an area location parameter list such as the district part of the post code (e.g. e+ ec + for east and central London)

==== Creating address fields from LPI and DPA files ====The flat, building, number, dependent thoroughfare, street, dependent locality, locality and post code can be created from the LPI and DPA files using the field specification.

For example where an LPI has secondary address fields, of start, suffix, end ,suffix:

e.g. saos=1, saosf=a, saoe=4, saoef=4b then the flat ‘1a-4b’ range may be created

==== Creating address objects from address lines ====This function applied both to Discovery addresses (variable number of lines) and ABP addresses (fixed number of lines)

Firstly deduce the start and end line count in order to avoid combining town or city lines into the core 4 fields.

Return Class is Address containing , Flat, Building, number, street, post code

$format (address lines, address) // address lines, at least 2 with the last being the post code

Lower case the address

Strip double spaces, and remove /.’, characters

Line count is the number of lines -1 (i.e lines before post code)

== Preparing the environment ===== Database preparation ===It is necessary to prepare the database and prepare the APB address entries for optimisation. This consists of 4 stages.

==== ABP files ====ABP files are documented and should have referential integrity. The following tables are significant

1) BLPU table

1) Street descriptor table

1) DPA table

1) LPI table

Load files, lower case, removal of “., ’ characters

Note, as submissions will have an area prefix, that it may be preferable to shard by first post code character in order to:

1) Enable rapid updates to one section from the ABP source 1) Lateral scalability 1) Development and Beta testing

==== Indexing strategies ====The following indexes are useful:

1) Post code 1) UPRN 1) USRN+ Language for Street description (welsh and English street names for same USRN)

==== Pre-prepared ABP addresses ====It is useful to create consistent ABP addresses based on an the DPA layout but populated with the LPI details as follows:

1) UPRN 1) Table source (DPA or LPI) 1) Table primary key (FK to LPI or DPA table no RI) 1) Flat (including range and suffixes e.g. 90a-90c) 1) Building 1) Number (including range and suffixes 1a- 3c) 1) Dependent thoroughfare (DPA field, null if LPI) 1) Street 1) Dependent locality (DPA field, null if LPI) 1) Locality 1) Post code 1) organisation

Town or city are not required

==== Prepared address indexes ====The following compound indexes (including null values) may be useful against the prepared address table

1) Post code, street, number, building, flat 1) Post code, dependent thoroughfare, number, building, flat 1) Street, number, post code 1) Building, flat, post code 1) Organisation, flat, post code

==== Concatenated address index ====For exact matching an index of concatenated address fields with removal of double spaces and leading and trailing spaces is useful

A concatenated address

Post code + ‘ ‘ + flat + ‘ ‘ + building + ‘ ‘ + number + ‘ ‘ dependent th + ‘ ‘ + street + dependent locality + ‘ ‘ + locality

==== Correction lists ====The following correction tables are useful

1) City list e.g. London 1) Known word corrections e.g. flt -> flat, cr -> crescent 1st= first 1) Flat equivalent word list e.g. flat, room, apartment 1) DSNot sure about this now. I think it’s a hang over.

GH? Drop word list e.g. ‘the’

1) “Is a DSYes, but got most of them I think

GHCan use ABP table 15_STreetDescriptor to help with this road” list e.g. road, avenue, street, grove, row, drive, walk, way, square

1) Swap lists where one word may be swapped for another if in the candidate e.g. apartment -> building, road -> street, nursing -> care, upper-> first 1) Number equivalence list

Matching

The idea is to produce one candidate UPRN and address from the ABP addresses indexes.

Algorithms proceed in rank order, the ranking designed so that if an address is found, this would be the most likely. Algorithms may produce more than one match for one algorithm, in which case the preference algorithm is the run.

When a match is found, the process may stop. Not all matches are equivalent and there are grades of likelihood, divided into different levels of approximation or qualifiers.

There are 5 qualifiers of a match, each with a spectrum of probability (likelihood)

1) Equivalent match i.e. we believe the property is the property that the UPRN refers to 1) Near match. We believe the property is the property that the UPRN refers to but the flat or property may be split. This only applies to matches where the flat or number suffix in either the Discovery or the ABP addresses may be ignored or dropped. This is in effect equivalent. 1) Sub- property (or child). The Discovery address represents a sub-property of the UPRN property. For example the Discovery address “flat 1a Eagle house” may only match to the higher level DPA or LPI entry of “Eagle house” 1) The Discovery address represents a supra-property (parent) of the UPRN property. For example the Discovery address ‘1 Angel Lane’ does not have an equivalent in DPA or LPI but ‘flat 11, 1 Angel Lane’ does exist so it matches to a more detailed identifier qualified as a supra-property 1) The Discovery address may represent a sibling of the UPRN property. For example the Discovery address ‘flat 12 , 1 Angel Lane’ may not exist in either ABP file but ‘flat 11, 1 Angel Lane’ does.

=== Learning street spelling corrections ===The systems should learn potential street spelling corrections (or street equivalents) from the submitted addresses. This can then be used to find addresses where the Discovery street or building spelling is incorrect and is a performance enhancement. This may be updated prior to processing a large batch of addresses where response times are not required. For each learned spelling correction, subsequent submissions that contain misspelled streets will be more DSYes, this is how Google works.

GHYes, probably lots of opportunity for machine learning with this

efficient. However, the system may also attempt to match street equivalents in real time.

==== Pre-Population of Street name corrections ====A bulk load of streets is used to generate a list of misspelled streets and their potentially correct equivalent, the correct equivalent being a street in the ABP database.

To avoid overmatching it is worthwhile accepting the first 2 or 3 characters from a submitted street and matching to any ABP street with a distance score of 2 or less.

=== Matching steps ======= Batch publisher matching ====It is assumed that one area is DSShould have said ‘area’

GHSubmitted?

submitted at a time via the requesting API.

==== Gather area post code list ====List the area post codes submitted by the publisher via the API (e.g. ‘E’ or ‘EC’) . This ensures that duplicates are reduced by limiting the scan to the set of addresses in a post code area

==== Formatting of Submitted Discovery address ====Discovery address should be manipulated and formatted before the matching algorithms take place. Most of the matches occur as a result of this manipulation and the more effective the manipulation the higher the chance of a match.

==== Find a match ====This applies the 100+ algorithms to the Discovery address object in order to find the best match. Algorithms are run in Rank order in order to avoid a premature approximate match when a better match is available using different manipulations.

Whilst errors occur in the ABP files it is assumed that Discovery addresses are in error and it is thus Discovery addresses that are manipulated

==== Preference algorithm for more than one match of same rank ====Occasionally an algorithm can find more than one match and a decision has to be made as to which one is preferred.

==== Approved and Inactive UPRNS ====An approved UPRN are preferred over an alternative but historical (inactive) UPRN’s are included.

== Algorithms ===== Rank examples ===The flat problem.

Discovery address = 10 a Mildenhall road e50ru

Potential e50ru, Mildenhall road candidates

1) Number = 10, Street= Mildenhall road 1) Flat = basement flat, Number = 10, street=Mildenhall road 1) Flat= Ground floor flat, Number =10, street= Mildenhall road 1) Flat=First floor flat, Number = 10, street= Mildenhall road

Rank 1 --> exact field match : FAIL Not exact on number

Rank 11 -> Multi-floor inference rule: Matches to -> Basement flat, Number = 10, Road = Mildenhall road

In the Rank 11 algorithm all possible flats for that number were collected and ‘a’ matches to the lowest GH

GHMuch needed With Tower Hamlets addresses

floor

=== Ranked matching algorithms ===These are documented in the associated spreadsheet

=== Address preformatting ===Before submitting an address for matching it should be formatted to align it with the address class fields. The following table shows an address formatting algorithm in mumps

| format(adrec,address) ; ;Populates the discovery address object

;initialise address field variables n d s d="~" set adflat="" set adbuild="" set adbno="" set adepth="" set adeploc="" set adstreet="" set adloc="" set post=""

set adbuild=$p(address,d) ;By default first is house name/ building set adstreet=$p(address,d,2) ;By default second is street set adbuild=$$lt^UPRNL(adbuild) set adstreet=$$lt^UPRNL(adstreet)

i addlines=2,adstreet?1n.n,adbuild'="" d .s adstreet=adstreet_" "_adbuild .s adbuild=""

if adbno'="",adstreet?1l1" "1l.e d .I $e(adstreet)'="y" d ..set adbno=adbno_$e(adstreet) ..set adstreet=$p(adstreet," ",2,20)

eform q splitstr(oflat,obuild,obno,ostreet,adflat,adbuild,adbno,adstreet) ;Splits up building into street and vice versa n i,xbuild,xstreet f i=1:1:$l(obuild," ") d .i $p(obuild," ",i)?1n.n d ..i $$hasflat^UPRNU($p(obuild," ",i+1,i+10)) d ...s adbno=adflat ...s xstreet=adstreet ...s adstreet=$p(obuild," ",0,i-1) ...s adflat=$p(obuild," ",i,i+10) ...s adbuild=xstreet q isno(word) ;is it a number if word?1n.n q 1 if word?1n.n1l q 1 if word?1n.n1"-"1n.n q 1 if word?1n.n1l1"-"1n.n1l q 1 q 0 flatbld(adflat,adbuild) ; ;is it a flat or number and if so what piece is the rest? if $$isflat^UPRNU(adbuild) do q .set adflat=$p(adbuild," ",1,2) .set adbuild=$p(adbuild," ",3,10) .if adbuild?1l1" ".e d ..set adflat=adflat_$p(adbuild," ") ..set adbuild=$p(adbuild," ",2,20)

q numstr(adbno,adstreet) ; ;Reformat a variety of number and street patterns

isroad(text) ; if text["road"!(text["street")!(text["avenue")!(text["court")!(text["square")!(text["drive")!(text[" way")!(text[" lane") q 1 if text[" grove"!(text[" row")!(text[" close") q 1 if text[" walk"!(text[" mews")!(text["causeway") q 1 if text[" park" q 1 I $p(text," ",$l(text," "))="st" q 1 q 0 iscity(text) ; n word,done s done=0 s word="" for s word=$O(^UPRNS("CITY",word)) q:word="" d .i text[word s done=1 q done |